
Important

This page includes instructions for managing Azure IoT Operations components using Kubernetes deployment manifests, which is in preview. This feature is provided with several limitations, and shouldn't be used for production workloads.

See the Supplemental Terms of Use for Microsoft Azure Previews for legal terms that apply to Azure features that are in beta, preview, or otherwise not yet released into general availability.

To send data to Microsoft Fabric Real-Time Intelligence from Azure IoT Operations, you can configure a data flow endpoint. This configuration allows you to specify the destination endpoint, authentication method, topic, and other settings.

Prerequisites

- An Azure IoT Operations instance

- Create a Fabric workspace. The default My workspace isn't supported.

- Create an event stream

- Add a custom endpoint as a source

Note

Event Stream supports multiple input sources including Azure Event Hubs. If you have an existing data flow to Azure Event Hubs, you can bring that into Fabric as shown in the Quickstart. This article shows you how to flow real-time data directly into Microsoft Fabric without any other hops in between.

Retrieve custom endpoint connection details

Retrieve the Kafka-compatible connection details for the custom endpoint. The connection details are used to configure the data flow endpoint in Azure IoT Operations.

The Entra ID method uses the managed identity of the Azure IoT Operations instance to authenticate with the event stream. Use the system-assigned managed identity authentication method when you configure the data flow endpoint.

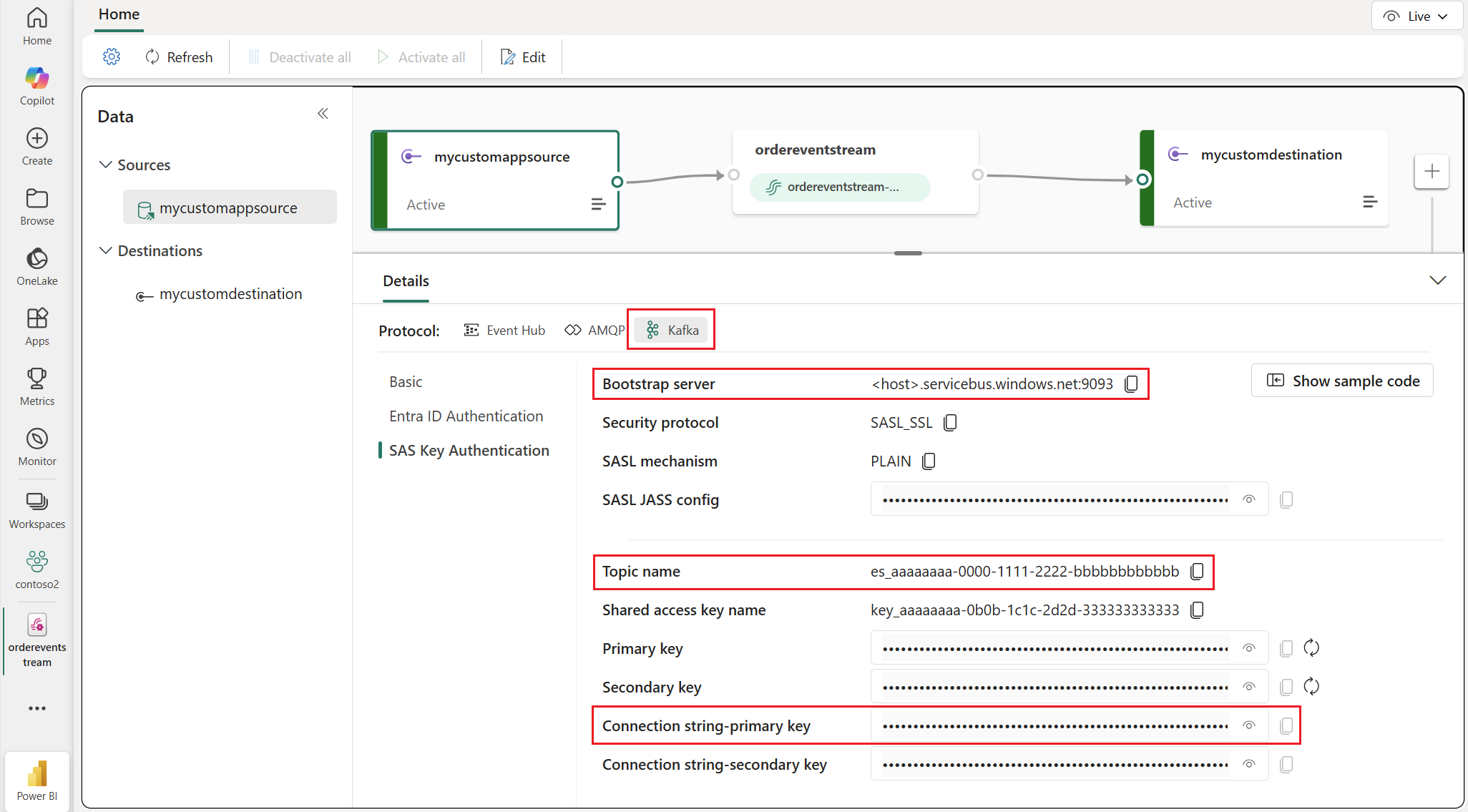

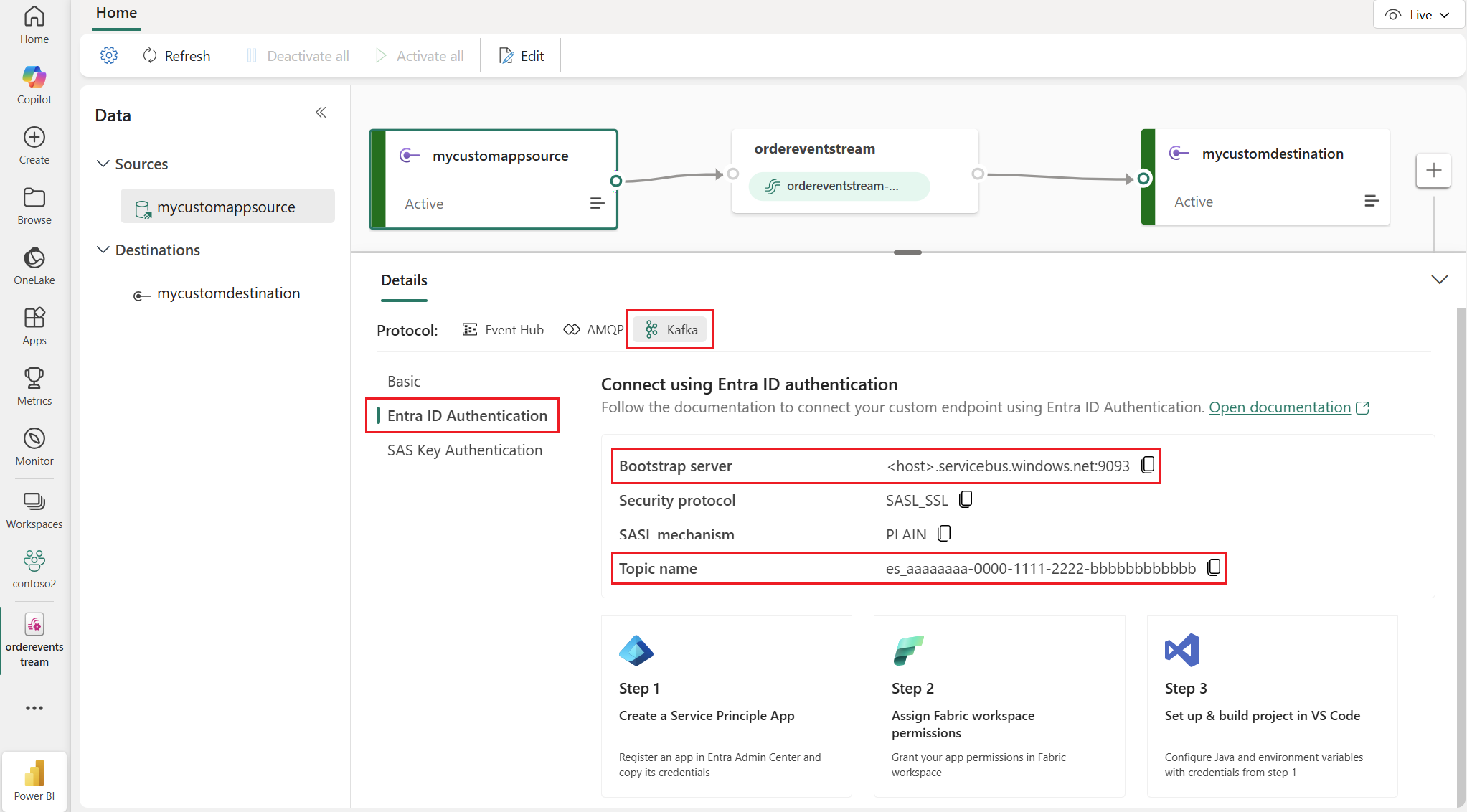

The connection details are in the Fabric portal under the Sources section of your event stream.

In the details panel for the custom endpoint, select Kafka protocol.

Select the Entra ID Authentication section to view the connection details.

Copy the values for the Bootstrap server, Topic name, and Connection string-primary key. You use these values to configure the data flow endpoint.

| Setting | Description |
|---|---|
| Bootstrap server | The bootstrap server address is used for the hostname property in the data flow endpoint. |
| Topic name | The event hub name is used as the Kafka topic and is of the format es_aaaaaaaa-0000-1111-2222-bbbbbbbbbbbb. |
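Before you paste the copied values into the endpoint configuration, a quick format check can catch copy errors. The following is a minimal Python sketch, not part of any Azure IoT Operations or Fabric tooling. `check_connection_details` is a hypothetical helper, `contoso` is a placeholder namespace, and the patterns assume the host format `*.servicebus.windows.net:9093` and a topic name of `es_` followed by a GUID, as this article describes. The character set allowed in the namespace label is an assumption.

```python
import re
from typing import List

# Assumed pattern: <namespace>.servicebus.windows.net:9093
# (the characters allowed in the namespace label are an assumption).
HOST_PATTERN = re.compile(r"^[A-Za-z0-9][A-Za-z0-9-]*\.servicebus\.windows\.net:9093$")

# Assumed pattern: "es_" followed by a GUID, as in
# es_aaaaaaaa-0000-1111-2222-bbbbbbbbbbbb.
TOPIC_PATTERN = re.compile(
    r"^es_[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}$"
)


def check_connection_details(bootstrap_server: str, topic_name: str) -> List[str]:
    """Return a list of problems; an empty list means both values look well formed."""
    problems = []
    if not HOST_PATTERN.match(bootstrap_server.strip()):
        problems.append("Bootstrap server should look like <namespace>.servicebus.windows.net:9093")
    if not TOPIC_PATTERN.match(topic_name.strip()):
        problems.append("Topic name should look like es_<GUID>")
    return problems


if __name__ == "__main__":
    issues = check_connection_details(
        "contoso.servicebus.windows.net:9093",  # placeholder host
        "es_aaaaaaaa-0000-1111-2222-bbbbbbbbbbbb",
    )
    print(issues or "Connection details look well formed")
```

A check like this validates only the shape of the values. It doesn't confirm that the event stream exists or that the endpoint can reach it.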

Create a Microsoft Fabric Real-Time Intelligence data flow endpoint

Microsoft Fabric Real-Time Intelligence supports Simple Authentication and Security Layer (SASL), system-assigned managed identity, and user-assigned managed identity authentication methods. For details, see Available authentication methods.

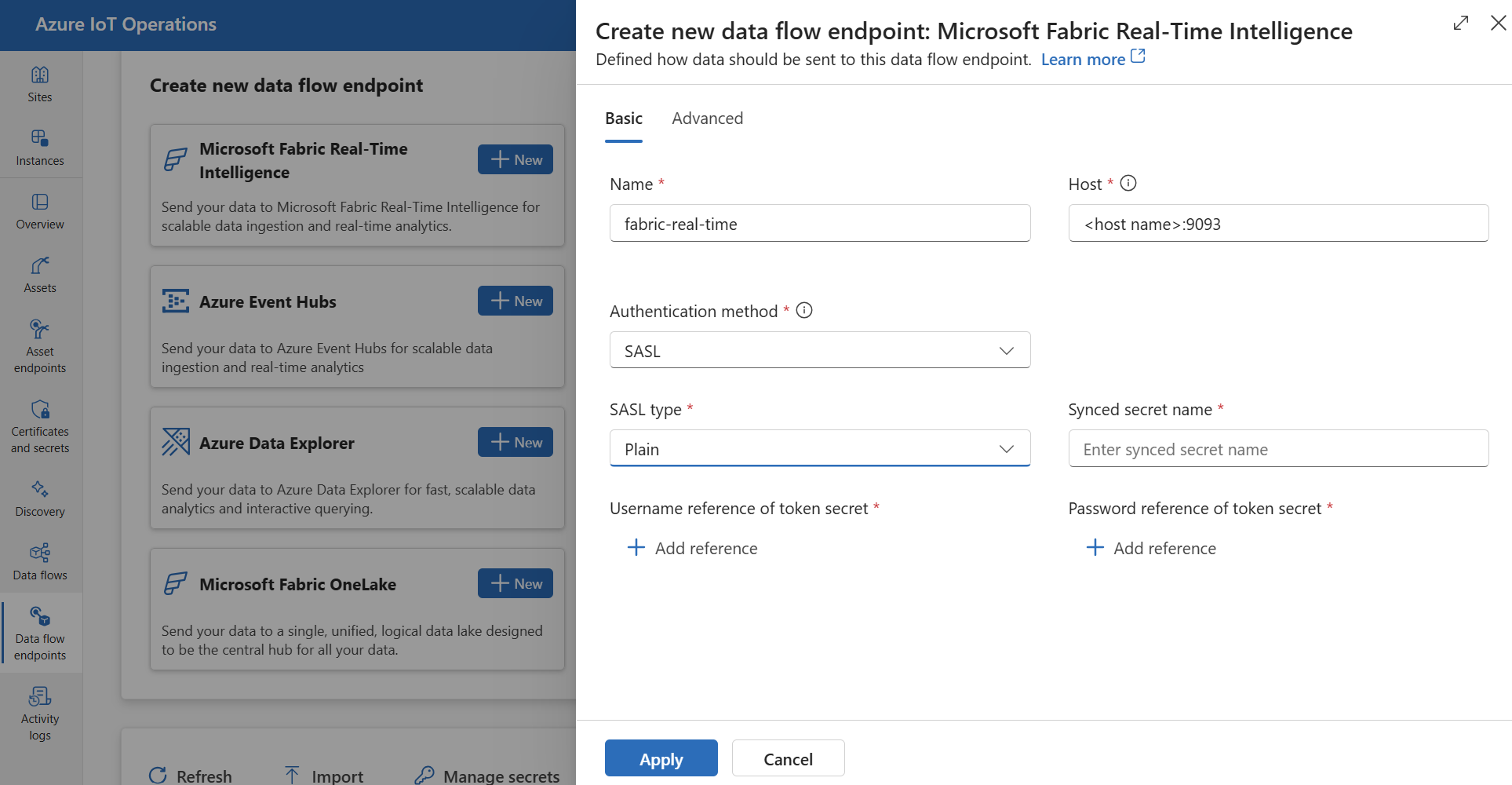

In the IoT Operations experience portal, select the Data flow endpoints tab.

Under Create new data flow endpoint, select Microsoft Fabric Real-Time Intelligence > New.

Enter the following settings for the endpoint.

| Setting | Description |
|---|---|
| Name | The name of the data flow endpoint. |
| Host | The hostname of the event stream custom endpoint in the format *.servicebus.windows.net:9093. Use the bootstrap server address noted previously. |
| Authentication method | The method used for authentication. Choose System assigned managed identity for Entra ID, User assigned managed identity, or SASL. |

If you choose the SASL authentication method, you must also enter the following settings:

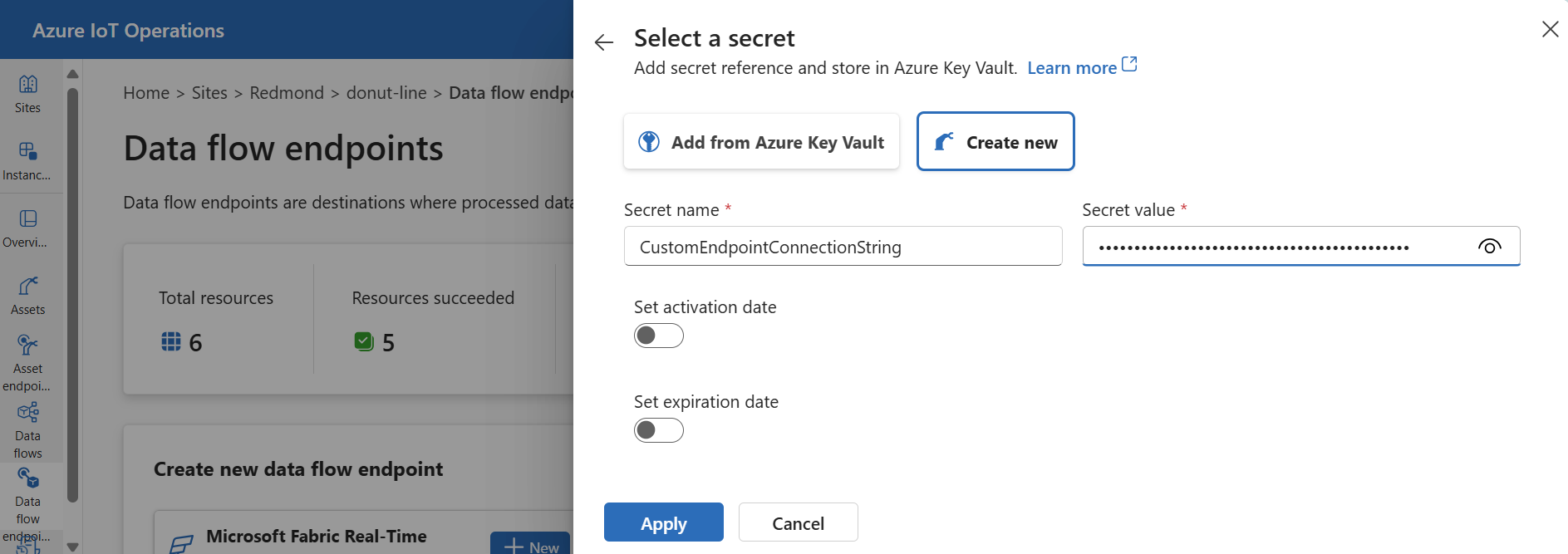

| Setting | Description |
|---|---|
| SASL type | Choose Plain. |
| Synced secret name | Enter a name for the synced secret. A Kubernetes secret with this name is created on the cluster. |

Select Add reference to create a new Key Vault reference or choose an existing one for the username and password references.

For Username reference of token secret, the secret value must be exactly the literal string $ConnectionString, not an environment variable reference.

For Password reference of token secret, the secret value must be the connection string with the primary key from the event stream custom endpoint.

Select Apply to provision the endpoint.

Available authentication methods

The following authentication methods are available for Fabric Real-Time Intelligence data flow endpoints.

System-assigned managed identity

Before you configure the data flow endpoint, assign a role to the Azure IoT Operations managed identity that grants permission to connect to the Kafka broker:

- In the Azure portal, go to your Azure IoT Operations instance and select Overview.

- Copy the name of the extension listed after Azure IoT Operations Arc extension. For example, azure-iot-operations-xxxx7.

- Go to the cloud resource you need to grant permissions. For example, go to the Event Hubs namespace > Access control (IAM) > Add role assignment.

- On the Role tab, select an appropriate role.

- On the Members tab, for Assign access to, select the User, group, or service principal option, then select + Select members and search for the Azure IoT Operations managed identity. For example, azure-iot-operations-xxxx7.

Then, configure the data flow endpoint with system-assigned managed identity settings.

On the operations experience data flow endpoint settings page, select the Basic tab, then choose Authentication method > System assigned managed identity.
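For deployments managed through Kubernetes manifests, the same settings could be assembled programmatically. The following Python sketch builds a manifest as a dictionary and prints it as JSON, a format that `kubectl apply -f` accepts. The `apiVersion`, `kind`, and every field name under `spec` are assumptions modeled on a Kafka-type data flow endpoint, so verify them against the resource definition installed on your cluster. `build_endpoint_manifest`, the endpoint name, the host, and the default namespace are hypothetical.

```python
import json


def build_endpoint_manifest(name: str, host: str, namespace: str = "azure-iot-operations") -> dict:
    """Build a data flow endpoint manifest that uses system-assigned managed identity.

    All field names below are assumptions; check them against the
    DataflowEndpoint definition on your cluster before applying.
    """
    return {
        "apiVersion": "connectivity.iotoperations.azure.com/v1",  # assumed API version
        "kind": "DataflowEndpoint",  # assumed kind
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "endpointType": "Kafka",  # the custom endpoint is Kafka-compatible
            "kafkaSettings": {
                "host": host,  # bootstrap server copied from the Fabric portal
                "authentication": {
                    "method": "SystemAssignedManagedIdentity",
                    "systemAssignedManagedIdentitySettings": {},
                },
                "tls": {"mode": "Enabled"},
            },
        },
    }


if __name__ == "__main__":
    manifest = build_endpoint_manifest(
        "fabric-rti-endpoint",  # hypothetical endpoint name
        "contoso.servicebus.windows.net:9093",  # placeholder host
    )
    print(json.dumps(manifest, indent=2))
```

Generating the manifest from a function keeps the host value in one place, which helps when the same endpoint is defined for several clusters.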

User-assigned managed identity

To use user-assigned managed identity for authentication, you must first deploy Azure IoT Operations with secure settings enabled. Then you need to set up a user-assigned managed identity for cloud connections. To learn more, see Enable secure settings in Azure IoT Operations deployment.

Before you configure the data flow endpoint, assign a role to the user-assigned managed identity that grants permission to connect to the Kafka broker:

- In the Azure portal, go to the cloud resource you need to grant permissions. For example, go to the Event Grid namespace > Access control (IAM) > Add role assignment.

- On the Role tab, select an appropriate role.

- On the Members tab, for Assign access to, select the Managed identity option, then select + Select members and search for your user-assigned managed identity.

Then, configure the data flow endpoint with user-assigned managed identity settings.

On the operations experience data flow endpoint settings page, select the Basic tab, then choose Authentication method > User assigned managed identity.

SASL

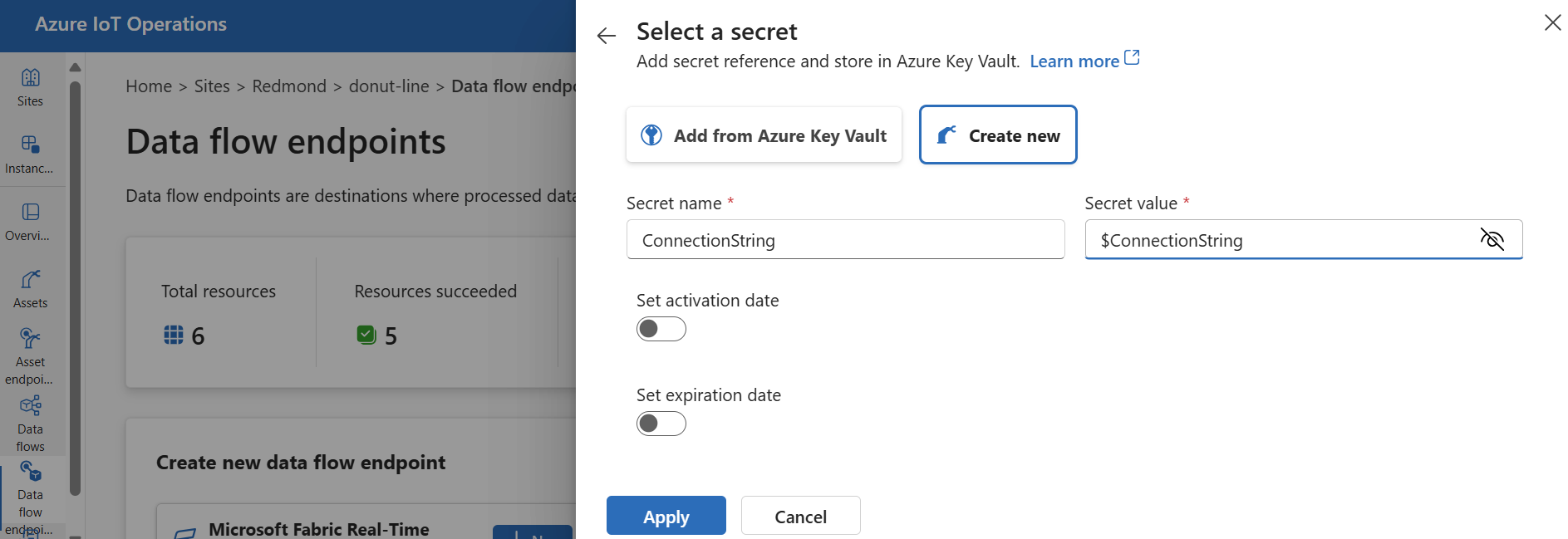

To use SASL for authentication, specify the SASL authentication method and configure SASL type and a secret reference with the name of the secret that contains the SASL token.

Azure Key Vault is the recommended way to sync the connection string to the Kubernetes cluster so that it can be referenced in the data flow. Secure settings must be enabled to configure this endpoint using the operations experience web UI.

On the operations experience data flow endpoint settings page, select the Basic tab, then choose Authentication method > SASL.

Enter the following settings for the endpoint:

| Setting | Description |
|---|---|
| SASL type | The type of SASL authentication to use. Supported types are Plain, ScramSha256, and ScramSha512. |
| Synced secret name | The name of the Kubernetes secret that contains the SASL token. |
| Username reference of token secret | The reference to the username or token secret used for SASL authentication. |
| Password reference of token secret | The reference to the password or token secret used for SASL authentication. |

The supported SASL types are:

- Plain

- ScramSha256

- ScramSha512

The secret must be in the same namespace as the Kafka data flow endpoint. The secret must have the SASL token as a key-value pair.
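To illustrate the key-value layout, the following Python sketch builds a Kubernetes Secret manifest for the connection-string case, where the username is the literal string `$ConnectionString` and the password is the connection string itself. The key names `username` and `password`, the secret name, the namespace `azure-iot-operations`, and the placeholder connection string are assumptions for illustration, and `build_sasl_secret` is a hypothetical helper. Kubernetes expects values under `data` to be base64 encoded.

```python
import base64
import json


def _b64(value: str) -> str:
    """Base64-encode a string the way Kubernetes expects for Secret data."""
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def build_sasl_secret(secret_name: str, namespace: str, connection_string: str) -> dict:
    """Build a Secret manifest holding the SASL credentials as key-value pairs.

    The key names "username" and "password" are assumptions for illustration.
    """
    if not connection_string:
        raise ValueError("connection_string must not be empty")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": secret_name, "namespace": namespace},
        "type": "Opaque",
        "data": {
            # The literal text "$ConnectionString", not an environment variable.
            "username": _b64("$ConnectionString"),
            "password": _b64(connection_string),
        },
    }


if __name__ == "__main__":
    secret = build_sasl_secret(
        "fabric-sasl-secret",  # hypothetical secret name
        "azure-iot-operations",  # assumed namespace of the data flow endpoint
        "<connection-string-primary-key>",  # placeholder; never commit a real key
    )
    print(json.dumps(secret, indent=2))
```

Python doesn't expand `$ConnectionString`, so the literal survives as written. If you create the secret from a shell instead, quote the value so the shell doesn't treat it as a variable.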

Advanced settings

The advanced settings for this endpoint are identical to the advanced settings for Azure Event Hubs endpoints.

Next steps

To learn more about data flows, see Create a data flow.