Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

Use functional dependencies to clean data. A functional dependency exists when one column in a semantic model (a Power BI dataset) depends on another column. For example, a ZIP code column can determine the value in a city column. A functional dependency appears as a one-to-many relationship between values in two or more columns in a DataFrame. This tutorial uses the Synthea dataset to show how functional dependencies help detect data quality problems.

In this tutorial, you learn how to:

- Apply domain knowledge to form hypotheses about functional dependencies in a semantic model.

- Get familiar with components of the Semantic Link Python library (SemPy) that automate data quality analysis. These components include:

FabricDataFrame—a pandas-like structure with additional semantic information.- Functions that automate evaluating hypotheses about functional dependencies and identify violations in your semantic models.

Prerequisites

Get a Microsoft Fabric subscription. Or, sign up for a free Microsoft Fabric trial.

Sign in to Microsoft Fabric.

Use the experience switcher on the bottom left side of your home page to switch to Fabric.

- Select Workspaces in the navigation pane, then select your workspace to set it as the current workspace.

Follow along in the notebook

Use the data_cleaning_functional_dependencies_tutorial.ipynb notebook to follow this tutorial.

To open the accompanying notebook for this tutorial, follow the instructions in Prepare your system for data science tutorials to import the notebook to your workspace.

If you'd rather copy and paste the code from this page, you can create a new notebook.

Be sure to attach a lakehouse to the notebook before you start running code.

Set up the notebook

In this section, you set up a notebook environment.

Check your Spark version. If you're using Spark 3.4 or later in Microsoft Fabric, Semantic Link is included by default, so you don't need to install it. If you're using Spark 3.3 or earlier, or you want to update to the latest Semantic Link, run the following command.

%pip install -U semantic-linkImport the modules you use in this notebook.

import pandas as pd import sempy.fabric as fabric from sempy.fabric import FabricDataFrame from sempy.dependencies import plot_dependency_metadataDownload the sample data. In this tutorial, use the Synthea dataset of synthetic medical records (small version for simplicity).

download_synthea(which='small')

Explore the data

Initialize a

FabricDataFramewith the content of the providers.csv file.providers = FabricDataFrame(pd.read_csv("synthea/csv/providers.csv")) providers.head()Check for data quality issues with SemPy's

find_dependenciesfunction by plotting a graph of autodetected functional dependencies.deps = providers.find_dependencies() plot_dependency_metadata(deps)The graph shows that

IddeterminesNAMEandORGANIZATION. This result is expected becauseIdis unique.Confirm that

Idis unique.providers.Id.is_uniqueThe code returns

Trueto confirm thatIdis unique.

Analyze functional dependencies in depth

The functional dependencies graph also shows that ORGANIZATION determines ADDRESS and ZIP, as expected. However, you might expect ZIP to also determine CITY, but the dashed arrow indicates that the dependency is only approximate, pointing towards a data quality issue.

There are other peculiarities in the graph. For example, NAME doesn't determine GENDER, Id, SPECIALITY, or ORGANIZATION. Each of these peculiarities might be worth investigating.

- Take a deeper look at the approximate relationship between

ZIPandCITYby using SemPy'slist_dependency_violationsfunction to list the violations:

providers.list_dependency_violations('ZIP', 'CITY')

- Draw a graph with SemPy's

plot_dependency_violationsvisualization function. This graph is helpful if the number of violations is small:

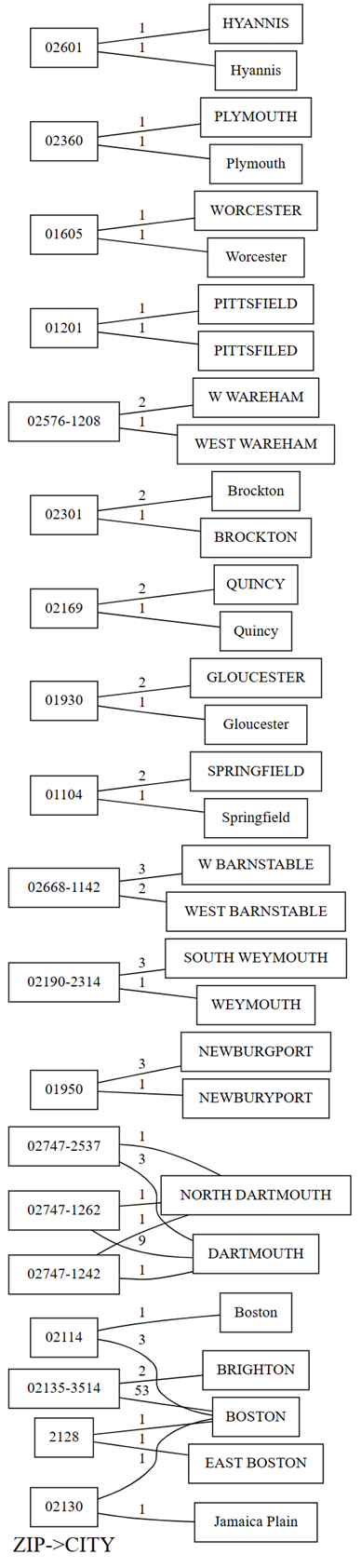

providers.plot_dependency_violations('ZIP', 'CITY')

The plot of dependency violations shows values for ZIP on the left hand side and values for CITY on the right hand side. An edge connects a zip code on the left hand side of the plot with a city on the right hand side if there's a row that contains these two values. The edges are annotated with the count of such rows. For example, there are two rows with zip code 02747-1242, one row with city "NORTH DARTHMOUTH" and the other with city "DARTHMOUTH", as shown in the previous plot and the following code:

- Confirm the observations from the plot by running the following code:

providers[providers.ZIP == '02747-1242'].CITY.value_counts()

The plot also shows that, among the rows that have

CITYas "DARTHMOUTH", nine rows have aZIPof 02747-1262. One row has aZIPof 02747-1242. One row has aZIPof 02747-2537. Confirm these observations with the following code:providers[providers.CITY == 'DARTHMOUTH'].ZIP.value_counts()There are other zip codes associated with "DARTMOUTH", but these zip codes aren't shown in the graph of dependency violations because they don't hint at data quality issues. For example, the zip code "02747-4302" is uniquely associated with "DARTMOUTH" and doesn't show up in the graph of dependency violations. Confirm by running the following code:

providers[providers.ZIP == '02747-4302'].CITY.value_counts()

Summarize data quality issues detected with SemPy

The dependency violations graph shows several data quality issues in this semantic model:

- Some city names are uppercase. Use string methods to fix this issue.

- Some city names have qualifiers (or prefixes), such as "North" and "East". For example, the ZIP Code "2128" maps to "EAST BOSTON" once and to "BOSTON" once. A similar issue occurs between "NORTH DARTMOUTH" and "DARTMOUTH". Drop these qualifiers or map the ZIP Codes to the city with the most common occurrence.

- There are typos in some city names, like "PITTSFIELD" vs. "PITTSFILED" and "NEWBURGPORT" vs. "NEWBURYPORT." For "NEWBURGPORT," fix this typo by using the most common occurrence. For "PITTSFIELD," with only one occurrence each, automatic disambiguation is much harder without external knowledge or a language model.

- Sometimes, prefixes like "West" are abbreviated to the single letter "W." Replace "W" with "West" if all occurrences of "W" stand for "West."

- The ZIP Code "02130" maps to "BOSTON" once and "Jamaica Plain" once. This issue isn't easy to fix. With more data, map to the most common occurrence.

Clean the data

Fix capitalization by changing values to title case.

providers['CITY'] = providers.CITY.str.title()Run violation detection again to confirm that there are fewer ambiguities.

providers.list_dependency_violations('ZIP', 'CITY')

Refine the data manually, or drop rows that violate functional constraints between columns by using SemPy's drop_dependency_violations function.

For each value of the determinant variable, drop_dependency_violations picks the most common value of the dependent variable and drops all rows with other values. Apply this operation only if you're confident that this statistical heuristic leads to correct results for your data. Otherwise, write your own code to handle the detected violations.

Run the

drop_dependency_violationsfunction on theZIPandCITYcolumns.providers_clean = providers.drop_dependency_violations('ZIP', 'CITY')List any dependency violations between

ZIPandCITY.providers_clean.list_dependency_violations('ZIP', 'CITY')

The code returns an empty list to indicate that there are no more violations of the functional constraint ZIP -> CITY.

Related content

See other tutorials for semantic link or SemPy:

- Tutorial: Analyze functional dependencies in a sample semantic model

- Tutorial: Extract and calculate Power BI measures from a Jupyter notebook

- Tutorial: Discover relationships in a semantic model using semantic link

- Tutorial: Discover relationships in the Synthea dataset using semantic link

- Tutorial: Validate data using SemPy and Great Expectations (GX)